Development of Multi-Node Monitoring System

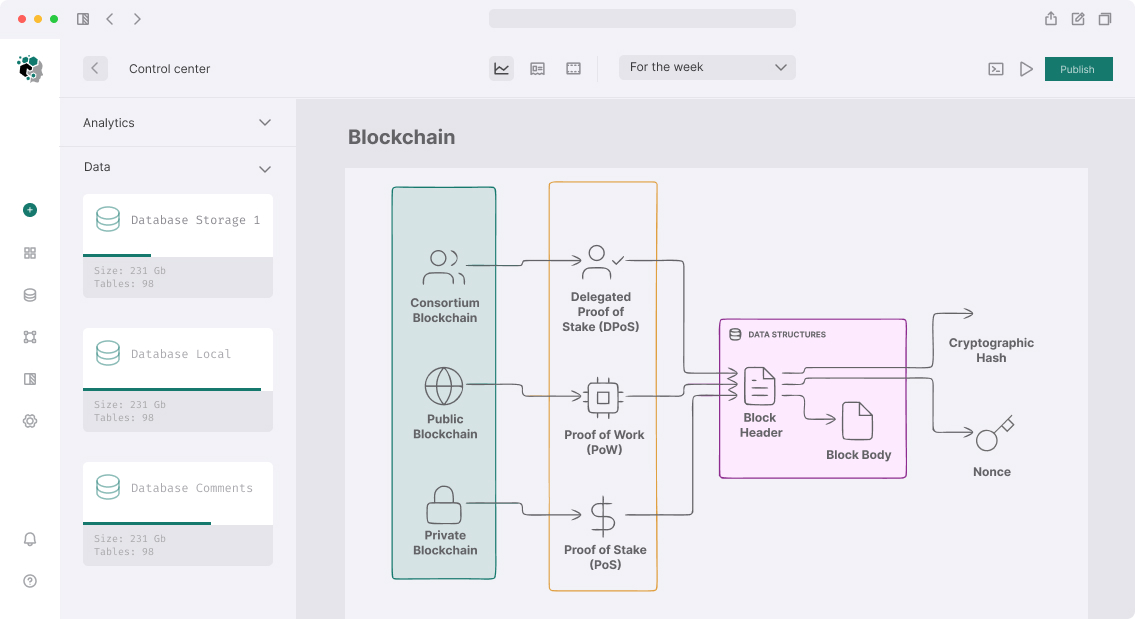

Monitoring blockchain nodes isn't a matter of "set up Prometheus and forget it". Blockchain-specific metrics differ fundamentally from standard server metrics: a node can be completely alive from a process perspective yet lag 10,000 blocks behind the chain and quietly serve outdated data to clients. A standard uptime monitor won't catch this.

The task becomes more complex with multi-network infrastructure: an Ethereum full node, a BSC validator, a Solana RPC node, a Cosmos validator. Each has its own telemetry, its own RPC methods for state checking and its own critical metrics.

What Actually Needs Monitoring

Blockchain-Specific Metrics

Block height lag: how far the node is behind the network head. This is the most critical metric. A node that is alive but lagging serves stale data to the clients of an RPC service, and for a validator it means slashing risk.

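```typescript
// Check block lag for an EVM-compatible node against a reference endpoint
async function checkBlockLag(nodeRpc: string, referenceRpc: string): Promise<number> {
  const [nodeBlock, referenceBlock] = await Promise.all([
    getBlockNumber(nodeRpc),
    getBlockNumber(referenceRpc), // public endpoint as reference
  ]);
  // Positive value: the node is behind the reference
  return referenceBlock - nodeBlock;
}

async function getBlockNumber(rpc: string): Promise<number> {
  const response = await fetch(rpc, {
    method: "POST",
    body: JSON.stringify({ jsonrpc: "2.0", method: "eth_blockNumber", params: [], id: 1 }),
    headers: { "Content-Type": "application/json" },
    signal: AbortSignal.timeout(5000), // fail fast if the node does not answer
  });
  if (!response.ok) {
    throw new Error(`RPC ${rpc} returned HTTP ${response.status}`);
  }
  const { result, error } = await response.json();
  if (error || typeof result !== "string") {
    throw new Error(`eth_blockNumber failed on ${rpc}`);
  }
  return parseInt(result, 16); // result is a hex quantity
}
```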

Peer count: the number of connected peers. A low peer count (< 5) signals sync problems and a potentially isolated node. For Ethereum: net_peerCount. For Cosmos: /net_info via RPC.

Sync status: whether the node is still syncing or already synced. For Ethereum, eth_syncing returns false when the node is synced and an object with progress fields otherwise. A node that is still syncing shouldn't accept production traffic.

Mempool depth: the number of pending transactions. For RPC nodes, an unusually large mempool can indicate processing issues. For Ethereum: txpool_status (available on clients that expose the txpool namespace, such as Geth).
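The three checks above can be combined into a single readiness decision. The following is a minimal sketch, not a definitive implementation: it assumes the raw `result` values of net_peerCount, eth_syncing and txpool_status have already been fetched, and the names `EvmNodeState`, `parse_evm_state` and `ready_for_traffic` are hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class EvmNodeState:
    peer_count: int
    syncing: bool
    pending_txs: int
    queued_txs: int


def parse_evm_state(peer_count_hex: str, syncing_result: Any, txpool_status: dict) -> EvmNodeState:
    """Convert raw JSON-RPC `result` values into plain numbers and flags."""
    return EvmNodeState(
        # net_peerCount returns a hex quantity such as "0x19"
        peer_count=int(peer_count_hex, 16),
        # eth_syncing returns False when synced, a progress object otherwise
        syncing=syncing_result is not False,
        # txpool_status returns hex quantities under "pending" and "queued"
        pending_txs=int(txpool_status["pending"], 16),
        queued_txs=int(txpool_status["queued"], 16),
    )


def ready_for_traffic(state: EvmNodeState, min_peers: int = 5) -> bool:
    """A node is ready only if it is synced and not isolated."""
    return not state.syncing and state.peer_count >= min_peers
```

The `min_peers` default mirrors the "< 5" figure mentioned above; a real deployment would tune it per network.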

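Validator-specific metrics (Cosmos, Ethereum PoS):

- Missed blocks / attestations: on Cosmos chains, missed signatures lead to jailing and downtime slashing; on Ethereum, missed attestations incur penalties
- Validator balance (ETH): falling below the ejection threshold triggers validator ejection
- Double sign risk: monitor for double-sign attempts, which are slashable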
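As an illustration of the first two items, a missed-block ratio can be derived from two samples of a cumulative missed-block counter, and the balance check reduces to a headroom calculation. This is a sketch under stated assumptions: the function names are hypothetical, the counter is assumed to be monotonically increasing, and the 16 ETH default reflects the Ethereum ejection balance.

```python
def missed_ratio(missed_start: int, missed_end: int,
                 height_start: int, height_end: int) -> float:
    """Fraction of blocks missed between two samples of a cumulative counter."""
    blocks = height_end - height_start
    if blocks <= 0:
        return 0.0  # no new blocks in the window, nothing to report
    # Guard against a counter reset (for example after an exporter restart)
    missed = max(0, missed_end - missed_start)
    return missed / blocks


def balance_headroom_eth(balance_eth: float, ejection_threshold_eth: float = 16.0) -> float:
    """ETH remaining above the ejection threshold; negative means below it."""
    return balance_eth - ejection_threshold_eth
```

For example, 3 missed blocks over a 100-block window gives a ratio of 0.03, which can be compared directly with percentage-based alert thresholds.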

Infrastructure Metrics with Blockchain Context

Standard CPU/RAM/disk metrics are critical but are interpreted differently. An Ethereum full node takes 1–2 TB of disk and needs NVMe rather than HDD. A sharp I/O increase may signal an active resync. An Ethereum node under full RPC load consumes 16–32 GB of RAM, which is normal and not a leak.

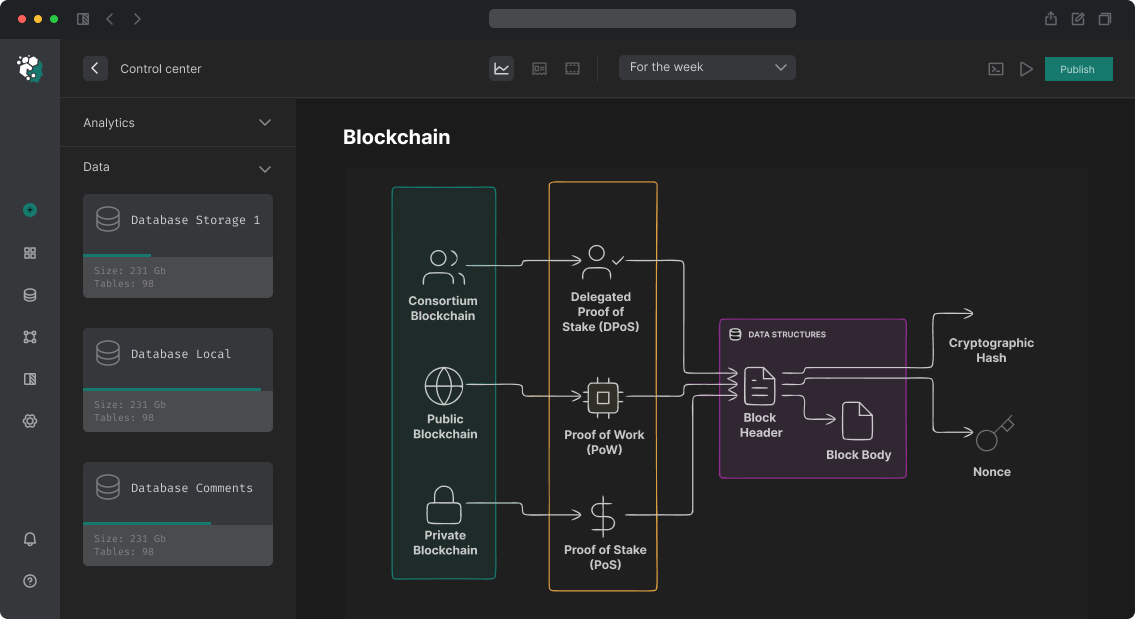

Monitoring System Architecture

Collector Layer

Each node type gets a specialized collector that translates blockchain-specific telemetry into a unified format (Prometheus metrics).

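```go
// Collector for EVM-compatible nodes (Go)
package collector

import (
	"context"
	"time"

	"github.com/prometheus/client_golang/prometheus"
)

// The "node" and "chain" label names are illustrative.
// peerCountDesc, syncStatusDesc and mempoolSizeDesc are declared the same way.
var blockLagDesc = prometheus.NewDesc(
	"blockchain_block_lag",
	"Blocks the node is behind the reference endpoint",
	[]string{"node", "chain"}, nil,
)

type EVMNodeCollector struct {
	nodeRPC      string
	referenceRPC string
	nodeName     string
	chainID      string
}

// Describe announces every metric this collector can emit.
func (c *EVMNodeCollector) Describe(ch chan<- *prometheus.Desc) {
	ch <- blockLagDesc
	ch <- peerCountDesc
	ch <- syncStatusDesc
	ch <- mempoolSizeDesc
}

// Collect runs on every scrape, so the RPC calls are bounded by a timeout.
func (c *EVMNodeCollector) Collect(ch chan<- prometheus.Metric) {
	ctx, cancel := context.WithTimeout(context.Background(), 10*time.Second)
	defer cancel()

	// getBlockLag (not shown) compares the node against the reference endpoint
	lag, err := c.getBlockLag(ctx)
	if err != nil {
		ch <- prometheus.NewInvalidMetric(blockLagDesc, err)
		return
	}
	ch <- prometheus.MustNewConstMetric(
		blockLagDesc,
		prometheus.GaugeValue,
		float64(lag),
		c.nodeName, c.chainID,
	)
	// ... remaining metrics
}
```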

For Cosmos-based nodes, parse /status, /net_info and /validators via RPC. For Solana, use the JSON-RPC methods getHealth, getSlot and getVoteAccounts. For Bitcoin, use getblockchaininfo and getpeerinfo.
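To show what "translating to a unified format" can look like for non-EVM nodes, here is a minimal sketch that reduces a Cosmos /status response and two Solana getSlot responses to plain numbers. It assumes the responses are already decoded from JSON; the function names are hypothetical, and only the `sync_info.latest_block_height` and `sync_info.catching_up` fields of /status are read.

```python
def parse_cosmos_status(status: dict) -> dict:
    """Extract height and sync flag from a decoded Cosmos RPC /status response."""
    sync_info = status["result"]["sync_info"]
    return {
        # The height is delivered as a decimal string
        "height": int(sync_info["latest_block_height"]),
        # True while the node is still catching up with the network
        "catching_up": bool(sync_info["catching_up"]),
    }


def solana_slot_lag(node_response: dict, reference_response: dict) -> int:
    """Slot lag between two decoded getSlot responses (result is an integer)."""
    return int(reference_response["result"]) - int(node_response["result"])
```

Both functions return values that map directly onto gauges, so the same dashboards and alert thresholds can be reused across networks.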

Ready exporters vs custom:

- ethereum-exporter (open source) covers basic EVM metrics
- cosmos-validator-exporter (Frens Validator) covers the Cosmos ecosystem
- For non-standard protocols (TON, Solana with custom metrics), write an exporter in Go

Aggregation and Storage

Prometheus + VictoriaMetrics for long-term storage. VictoriaMetrics is preferable for multi-network operations: it compresses time series better and can ingest data from multiple Prometheus instances, for example via remote_write.

```yaml
# prometheus.yml: scrape config for multi-node environment
scrape_configs:
  - job_name: 'ethereum-nodes'
    scrape_interval: 15s
    scrape_timeout: 10s
    static_configs:
      - targets:
          - 'eth-node-1:9090'
          - 'eth-node-2:9090'
          - 'eth-node-3:9090'
    relabel_configs:
      - source_labels: [__address__]
        target_label: instance

  - job_name: 'cosmos-validators'
    scrape_interval: 30s  # Cosmos block ~6 sec, 30 sec sufficient
    static_configs:
      - targets: ['cosmos-val-1:26660', 'cosmos-val-2:26660']

  - job_name: 'solana-rpc'
    scrape_interval: 10s  # Solana ~400ms slot, frequent checks needed
    static_configs:
      - targets: ['solana-rpc-1:9101']
```

Alerting

Use Grafana Alerting or Alertmanager. Key principle: different severities for different metrics. Not everything requires an immediate response.

| Metric | Warning | Critical | Action |
|---|---|---|---|
| Block lag (EVM) | > 10 blocks | > 50 blocks | Auto-restart or traffic switch |
| Peer count | < 10 | < 3 | Check firewall/network |
| Disk space (free) | < 20% | < 10% | Expand volume or prune |
| Validator missed blocks | > 1% | > 5% | Respond immediately (slashing risk) |
| Memory usage | > 80% | > 95% | Check for leaks, restart |
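The table mixes metrics where a higher value is worse (block lag, memory) with metrics where a lower value is worse (peer count, free disk), which is easy to get wrong in custom tooling. The sketch below encodes the same thresholds with an explicit direction flag; the metric keys and the `classify` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    warning: float
    critical: float
    higher_is_worse: bool


# Values copied from the severity table above
THRESHOLDS = {
    "block_lag": Threshold(10, 50, higher_is_worse=True),
    "peer_count": Threshold(10, 3, higher_is_worse=False),
    "disk_free_pct": Threshold(20, 10, higher_is_worse=False),
    "validator_missed_pct": Threshold(1, 5, higher_is_worse=True),
    "memory_used_pct": Threshold(80, 95, higher_is_worse=True),
}


def classify(metric: str, value: float) -> str:
    """Return "ok", "warning" or "critical" for a metric sample."""
    t = THRESHOLDS[metric]
    if t.higher_is_worse:
        if value > t.critical:
            return "critical"
        if value > t.warning:
            return "warning"
    else:
        if value < t.critical:
            return "critical"
        if value < t.warning:
            return "warning"
    return "ok"
```

Comparisons are strict, matching the ">" and "<" signs in the table, so a block lag of exactly 10 is still "ok".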

```yaml
# Prometheus alerting rules (evaluated by Prometheus, routed by Alertmanager)
groups:
  - name: blockchain-nodes
    rules:
      - alert: ValidatorMissedBlocks
        # rate() is per second; multiplying by the ~6 s Cosmos block time
        # gives missed blocks per produced block, so 0.05 means 5%
        expr: rate(cosmos_validator_missed_blocks_total[5m]) * 6 > 0.05
        for: 2m
        labels:
          severity: critical
        annotations:
          summary: "Validator {{ $labels.validator }} missing >5% blocks"
          description: "Slashing risk. Immediate action required."

      - alert: NodeBlockLagHigh
        expr: blockchain_block_lag{chain="ethereum"} > 50
        for: 5m
        labels:
          severity: critical  # > 50 blocks is the critical threshold
        annotations:
          summary: "Ethereum node {{ $labels.instance }} lagging {{ $value }} blocks"
```

Automatic Response

Passive monitoring isn't sufficient for 24/7 production. Critical scenarios need automatic remediation.

Auto-failover for RPC nodes. The load balancer (HAProxy/nginx) polls each node's health endpoint and automatically removes a failing node from rotation. A blockchain node health check must include a block lag check, not just an HTTP 200.

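```python
# Health check script for HAProxy (called as external check).
# HAProxy's external-check passes proxy/server address and port as arguments,
# so a thin wrapper is assumed to call this script with the two RPC URLs.
import asyncio
import sys

from web3 import AsyncWeb3

MAX_LAG = 20  # maximum acceptable lag in blocks


async def check_node_health(node_url: str, reference_url: str) -> bool:
    try:
        w3_node = AsyncWeb3(AsyncWeb3.AsyncHTTPProvider(node_url, request_kwargs={"timeout": 3}))
        w3_ref = AsyncWeb3(AsyncWeb3.AsyncHTTPProvider(reference_url, request_kwargs={"timeout": 3}))
        # block_number is awaitable on AsyncWeb3, so both requests run concurrently
        node_block, ref_block = await asyncio.gather(
            w3_node.eth.block_number,
            w3_ref.eth.block_number,
        )
        return (ref_block - node_block) <= MAX_LAG
    except Exception:
        # Any RPC or network error counts as unhealthy
        return False


if __name__ == "__main__":
    if len(sys.argv) != 3:
        sys.exit(2)  # usage: script <node_url> <reference_url>
    healthy = asyncio.run(check_node_health(sys.argv[1], sys.argv[2]))
    sys.exit(0 if healthy else 1)
```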

Auto-restart on hang. A node can hang without crashing. Watchdog: if the block height is unchanged for N minutes, restart the service via systemd or a Kubernetes liveness probe combined with the pod restart policy.
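The watchdog logic itself is small. The following sketch tracks the last time the block height advanced and reports a stall once the configured window has elapsed. The `StallDetector` class is a hypothetical name, and the actual restart (for example a systemd restart of the node's unit) is deliberately left to the caller.

```python
import time
from typing import Callable, Optional


class StallDetector:
    """Reports a stall when the block height has not advanced for too long."""

    def __init__(self, max_stall_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._max_stall = max_stall_seconds
        self._clock = clock  # injectable so the logic can be tested without sleeping
        self._last_height: Optional[int] = None
        self._last_change = clock()

    def observe(self, height: int) -> bool:
        """Record a height sample; return True if the node looks stalled."""
        now = self._clock()
        if self._last_height is None or height > self._last_height:
            # Progress was made: remember the height and reset the timer
            self._last_height = height
            self._last_change = now
            return False
        return (now - self._last_change) >= self._max_stall
```

A polling loop would call `observe()` with the current block height every few seconds and trigger the restart only when it returns True. Using a monotonic clock keeps the timer immune to wall-clock adjustments.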

Dashboards

Grafana dashboards are organized by level: Overview (all nodes, all networks, status at a glance), Per-network deep dive (detailed metrics per network), Validator performance (for staking nodes, including APR and slashing risks), Infrastructure (CPU/RAM/disk per node).

For public RPC services, add request metrics (RPS, latency, error rate), rate limiting stats and top methods by load.

Development Timeline

| Component | Timeline |
|---|---|
| Basic exporters (EVM + 1–2 other networks) | 1–2 weeks |
| Prometheus + VictoriaMetrics + Grafana setup | 3–5 days |
| Alert rules + PagerDuty/Telegram integration | 2–3 days |
| Auto-failover for RPC | 1 week |
| Dashboards + documentation | 1 week |

Monitoring for 3–5 networks with basic dashboards and alerts takes 3–4 weeks. An extended system with auto-remediation and custom exporters for non-standard protocols takes 6–8 weeks.