Developing a Retry System for Bitrix24 Integrations

Integrations fail. An external API returns 503, the network hiccups, a banking service goes offline for maintenance. The question isn't whether the integration will fail, but what happens after it does. A retry system is automatic recovery: if an operation fails now, it is tried again in a minute, an hour, a day. If it still fails after N attempts, a human is notified.

Principles That Cannot Be Violated

Idempotency. A retry must produce the same result as the first attempt, with no additional side effects. If an operation creates a payment order in the bank, a repeated call must not create a second one. To achieve this, send an idempotency_key (a unique UUID per operation): the bank or external system ignores duplicates with the same key.
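To make the deduplication concrete, here is a minimal sketch of how the receiving side might honour an idempotency key. The class `IdempotentPaymentGateway`, its in-memory store, and the method names are hypothetical stand-ins for the bank's API, which normally performs this check itself.

```php
<?php

// Hypothetical stand-in for an external system that deduplicates by key.
final class IdempotentPaymentGateway
{
    /** @var array<string, array{payment_id: int, amount: int}> results by idempotency key */
    private array $processed = [];
    private int $nextId = 1;

    public function createPayment(string $idempotencyKey, int $amount): array
    {
        // A repeated call with a known key returns the stored result
        // instead of creating a second payment.
        if (isset($this->processed[$idempotencyKey])) {
            return $this->processed[$idempotencyKey];
        }

        $result = ['payment_id' => $this->nextId++, 'amount' => $amount];
        $this->processed[$idempotencyKey] = $result;

        return $result;
    }

    public function paymentCount(): int
    {
        return count($this->processed);
    }
}

$gateway = new IdempotentPaymentGateway();
$first = $gateway->createPayment('pay-uuid-v4', 50000);
$retry = $gateway->createPayment('pay-uuid-v4', 50000); // retry after a timeout
```

The key has to be generated once, when the task is created, and stored in the task payload. A key generated inside the handler would change on every retry and defeat the check.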

Exponential backoff. The first attempt runs immediately, the second after 1 minute, the third after 4 minutes, the fourth after 16 minutes: each delay is a fixed multiple of the previous one. This prevents a storm of retry requests when an overloaded service recovers.

Jitter. Add a random component to the delay (±20%). If a thousand operations fail simultaneously and all retry with identical delays, the result is another storm. Jitter spreads the peak.
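The two rules can be combined in one small function. This is a sketch under stated assumptions: the name `backoffDelay` and its parameters are illustrative, and the defaults (60-second base, factor 4) are chosen only to reproduce the 1, 4, 16 minute schedule described above.

```php
<?php

/**
 * Delay in seconds before the given retry (1 = first retry).
 * $baseSeconds, $factor and $jitterRatio are illustrative defaults.
 */
function backoffDelay(
    int $retryNumber,
    int $baseSeconds = 60,
    int $factor = 4,
    float $jitterRatio = 0.2
): int {
    $retryNumber = max(1, $retryNumber);

    // Exponential part: 60 s, 240 s, 960 s, ... for retries 1, 2, 3, ...
    $delay = $baseSeconds * ($factor ** ($retryNumber - 1));

    // Jitter: shift the delay by a random amount within ±jitterRatio.
    $spread = (int) round($delay * $jitterRatio);

    return $delay + random_int(-$spread, $spread);
}

foreach ([1, 2, 3] as $retry) {
    printf("retry %d: wait %d s\n", $retry, backoffDelay($retry));
}
```

In practice the delay is usually also capped at some maximum (for example, a few hours) so that late retries do not drift into days unintentionally.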

Maximum retry attempts. After N attempts (usually 5–10), the operation is marked as permanently failed. Then—manual intervention.

Queue Architecture with Retry

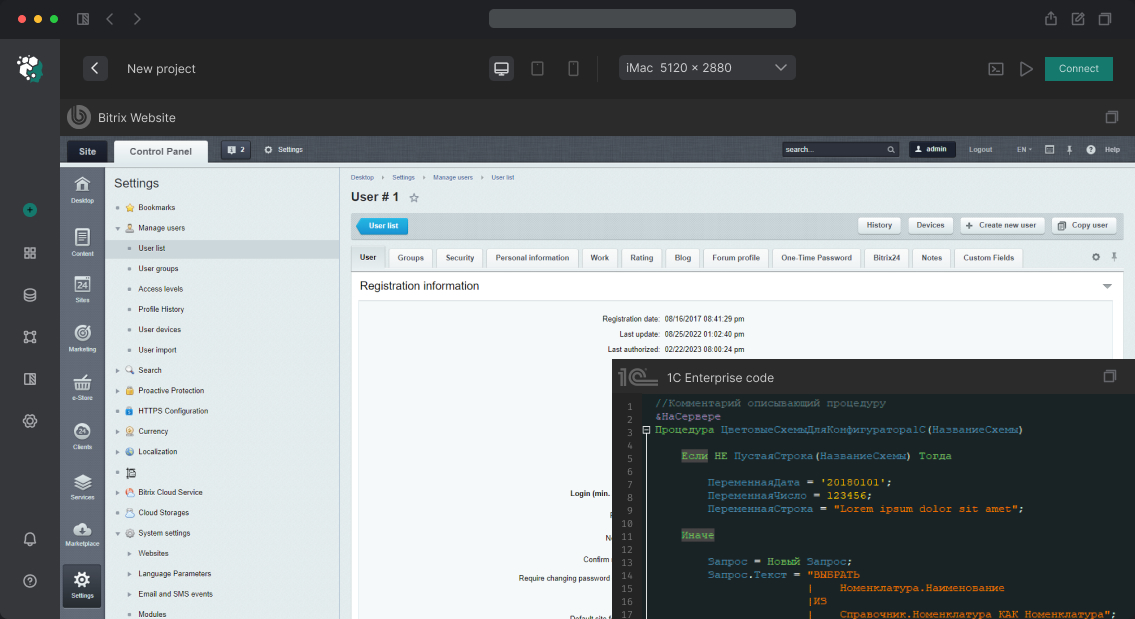

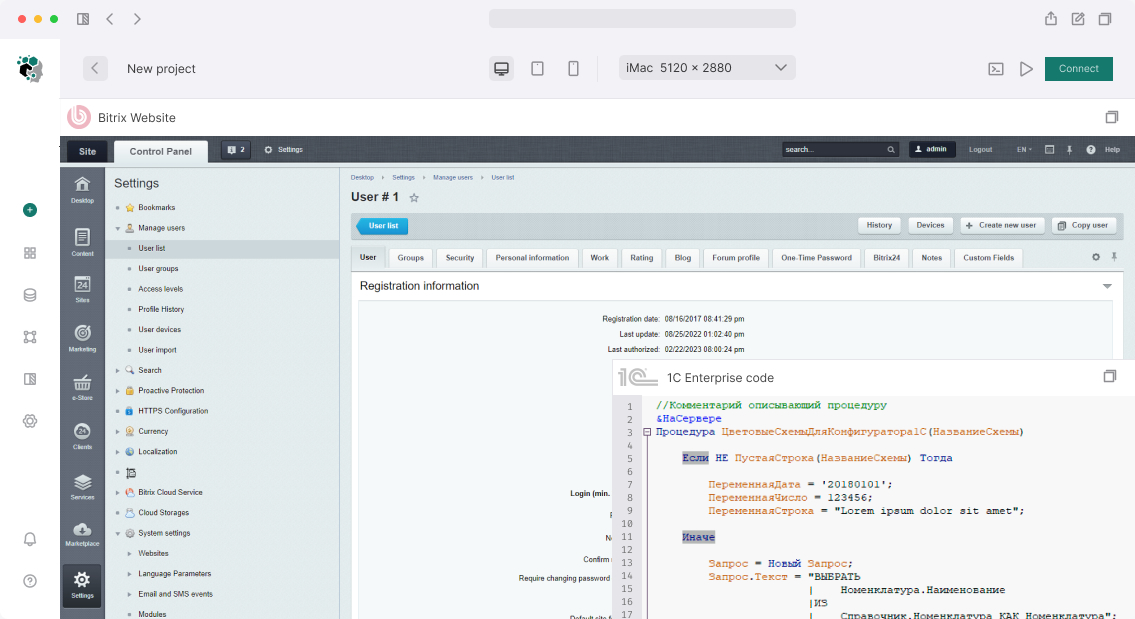

For cloud Bitrix24 (no server access), retry is implemented via:

- Bitrix agents (\CAgent::AddAgent), for simple scenarios with few operations

- External service (separate PHP/Node.js server) with Redis Queue or RabbitMQ

For on-premise Bitrix24, use agents or a queue built on an infoblock or HL-block (Highload block).

Task structure in queue:

```json
{
  "id": "uuid-v4",
  "type": "bank_payment_create",
  "payload": {
    "deal_id": 1234,
    "amount": 50000,
    "idempotency_key": "pay-uuid-v4"
  },
  "attempts": 2,
  "max_attempts": 5,
  "next_run_at": "2025-03-13T15:30:00Z",
  "status": "pending",
  "last_error": "Connection timeout"
}
```

Task table: integration_jobs in PostgreSQL or MySQL. Index on (status, next_run_at)—the worker picks tasks ready for execution.

Worker Implementation

The worker is a separate process launched by cron every minute (or run as a daemon under Supervisor). Algorithm:

```php
// Grab a batch of tasks ready to run (SELECT ... FOR UPDATE SKIP LOCKED)
$jobs = JobRepository::getPending(limit: 10);

foreach ($jobs as $job) {
    try {
        $job->markRunning();
        $handler = HandlerFactory::create($job->type);
        $handler->execute($job->payload);
        $job->markSuccess();
    } catch (RetryableException $e) {
        // Temporary error: retry unless the attempt limit is exhausted.
        // Assumption: markRunning() has already counted this attempt.
        if ($job->attempts >= $job->max_attempts) {
            $job->markFailed($e->getMessage());
            $this->notify($job);
            continue;
        }
        $delay = $this->calcBackoff($job->attempts); // 2^attempts * 60 seconds
        $spread = (int) ($delay * 0.2);
        $delay += random_int(-$spread, $spread); // ±20% jitter
        $job->scheduleRetry($delay, $e->getMessage());
    } catch (FatalException $e) {
        // Business error: don't retry, notify
        $job->markFailed($e->getMessage());
        $this->notify($job);
    }
}
```

FOR UPDATE SKIP LOCKED—mandatory with multiple workers. Without it, two workers might take one task and execute it twice.
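As an illustration, this is roughly what the query behind `JobRepository::getPending` could look like. It is a sketch, not the author's implementation: it assumes a PDO connection to PostgreSQL 9.5+ or MySQL 8.0+ (the versions that support SKIP LOCKED), an `integration_jobs` table with the columns from the task structure above, and a hypothetical function name `claimPendingJobs`.

```php
<?php

// Rows stay locked only until the transaction ends, so the status change
// to 'running' is committed in the same transaction that selected them.
const CLAIM_SQL = <<<'SQL'
SELECT id, type, payload, attempts, max_attempts
FROM integration_jobs
WHERE status = 'pending' AND next_run_at <= CURRENT_TIMESTAMP
ORDER BY next_run_at
LIMIT :limit
FOR UPDATE SKIP LOCKED
SQL;

/** @return array<int, array<string, mixed>> jobs claimed by this worker */
function claimPendingJobs(PDO $pdo, int $limit = 10): array
{
    $pdo->beginTransaction();
    try {
        $select = $pdo->prepare(CLAIM_SQL);
        $select->bindValue(':limit', $limit, PDO::PARAM_INT);
        $select->execute();
        $jobs = $select->fetchAll(PDO::FETCH_ASSOC);

        $mark = $pdo->prepare(
            "UPDATE integration_jobs SET status = 'running' WHERE id = :id"
        );
        foreach ($jobs as $job) {
            $mark->execute([':id' => $job['id']]);
        }

        $pdo->commit();

        return $jobs;
    } catch (Throwable $e) {
        $pdo->rollBack();
        throw $e;
    }
}
```

Rows locked by one worker are skipped by the others instead of blocking them, so several workers can poll the same table concurrently. The index on (status, next_run_at) keeps the WHERE and ORDER BY cheap.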

Exception Classification

Correctly divide errors into "retry" and "don't retry":

| Error Type | Class | Retry |
|---|---|---|
| HTTP 429 (Rate Limit) | RetryableException | Yes, long delay |
| HTTP 502 / 503 (Bad Gateway / Service Unavailable) | RetryableException | Yes |
| Network timeout | RetryableException | Yes |
| HTTP 401 (Unauthorized) | Special: refresh token, then retry | Yes, once |
| HTTP 400 (Bad Request) | FatalException | No |
| HTTP 422 (Validation Error) | FatalException | No |
| Duplicate operation (idempotency hit) | Success | n/a |
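The table translates almost directly into a lookup. The sketch below assumes PHP 8.1+ (for enums). The enum `RetryDecision` and the function `classifyHttpFailure` are hypothetical names, and treating every status not listed in the table as fatal is an assumption that a real integration should revisit per API.

```php
<?php

enum RetryDecision: string
{
    case Retry = 'retry';
    case RetryLongDelay = 'retry_long_delay';
    case RefreshTokenThenRetryOnce = 'refresh_token_then_retry_once';
    case Fail = 'fail';
}

/** Maps an HTTP status code (null = network timeout, no response) to a decision. */
function classifyHttpFailure(?int $status): RetryDecision
{
    return match ($status) {
        null => RetryDecision::Retry,               // network timeout
        429 => RetryDecision::RetryLongDelay,       // rate limit
        502, 503 => RetryDecision::Retry,           // upstream temporarily down
        401 => RetryDecision::RefreshTokenThenRetryOnce,
        400, 422 => RetryDecision::Fail,            // request itself is wrong
        // Assumption: anything not in the table is treated as fatal.
        default => RetryDecision::Fail,
    };
}

echo classifyHttpFailure(503)->value, PHP_EOL;
```

A handler can then throw RetryableException or FatalException based on the returned decision, keeping the HTTP details out of the worker loop.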

Dead Letter Queue

Tasks that exhaust their retry limit move to a Dead Letter Queue (DLQ), which is a separate table or queue. The DLQ isn't a trash bin; it's a list of things requiring attention. The DLQ interface should support:

- View failed tasks with complete attempt history

- Manual retry after fixing the error cause

- Edit payload (if data needs correction before retry)

- Batch retry of task groups

Bitrix24 Integration

On a permanent error, or once the retry limit is exceeded, notify the responsible person in Bitrix24:

```php
// The im module must be loaded before CIMNotify is used
if (\Bitrix\Main\Loader::includeModule('im')) {
    \CIMNotify::Add([
        'MESSAGE_TYPE' => IM_MESSAGE_SYSTEM,
        'TO_USER_ID' => $responsibleUserId,
        'MESSAGE' => "Integration: operation #{$job->id} failed after {$job->attempts} attempts. " .
            "Error: {$job->last_error}. Manual intervention required.",
    ]);
}
```

Alternatively, use the REST method im.notify.system.add when the notification is sent from an external service.
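A minimal sketch of the REST variant follows. It assumes the external service holds an OAuth access token for a Bitrix24 application, and that the method takes `USER_ID` and `MESSAGE` parameters; check both against the current Bitrix24 REST documentation. The function names, host and token are placeholders.

```php
<?php

/**
 * Builds the HTTP request for im.notify.system.add.
 * $portalHost and $accessToken are placeholders supplied by the caller.
 *
 * @return array{url: string, body: string}
 */
function buildSystemNotifyRequest(
    string $portalHost,
    string $accessToken,
    int $userId,
    string $message
): array {
    return [
        'url' => "https://{$portalHost}/rest/im.notify.system.add.json",
        'body' => http_build_query([
            'auth' => $accessToken,
            'USER_ID' => $userId,
            'MESSAGE' => $message,
        ]),
    ];
}

/** Sends the request with PHP's built-in HTTP stream wrapper. */
function sendSystemNotify(array $request): string|false
{
    $context = stream_context_create([
        'http' => [
            'method' => 'POST',
            'header' => 'Content-Type: application/x-www-form-urlencoded',
            'content' => $request['body'],
            'timeout' => 10,
        ],
    ]);

    return file_get_contents($request['url'], false, $context);
}

$request = buildSystemNotifyRequest(
    'example.bitrix24.com',
    'ACCESS_TOKEN',
    1,
    'Integration: operation #42 failed after 5 attempts. Manual intervention required.'
);
// sendSystemNotify($request); // performs the actual network call
```

The notification call can itself fail, so it is worth sending it through the same retry queue rather than inline in the worker.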

Queue Monitoring

| Metric | What It Shows |
|---|---|
| pending_jobs_count | Current load: number of tasks waiting to run |
| failed_jobs_count | Accumulated error debt |
| avg_retry_count | Average attempts until success |
| p99_execution_time | Worker performance |
| dlq_size_delta | DLQ growth or shrinkage |
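The first three metrics can be derived from the task rows alone. The sketch below computes them in PHP over an array of rows. The function name `queueMetrics` and the status values `success` and `failed` are assumptions (the task structure above shows only `pending`), and in production these would more likely be SQL aggregates over integration_jobs.

```php
<?php

/**
 * @param array<int, array{status: string, attempts: int}> $jobs
 * @return array{pending_jobs_count: int, failed_jobs_count: int, avg_retry_count: float}
 */
function queueMetrics(array $jobs): array
{
    $pending = 0;
    $failed = 0;
    $successAttempts = [];

    foreach ($jobs as $job) {
        if ($job['status'] === 'pending') {
            $pending++;
        } elseif ($job['status'] === 'failed') {
            $failed++;
        } elseif ($job['status'] === 'success') {
            $successAttempts[] = $job['attempts'];
        }
    }

    return [
        'pending_jobs_count' => $pending,
        'failed_jobs_count' => $failed,
        // Average attempts over successful jobs only; 0.0 when none succeeded yet.
        'avg_retry_count' => $successAttempts === []
            ? 0.0
            : (float) (array_sum($successAttempts) / count($successAttempts)),
    ];
}

$metrics = queueMetrics([
    ['status' => 'pending', 'attempts' => 2],
    ['status' => 'success', 'attempts' => 1],
    ['status' => 'success', 'attempts' => 3],
    ['status' => 'failed', 'attempts' => 5],
]);
```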

Development Stages

| Stage | Content | Timeline |
|---|---|---|
| Design | Data schema, error classification, backoff strategy | 2–3 days |
| Task table and repository | CRUD, locks, indexes | 2–3 days |
| Worker | Core logic, exception handling | 3–5 days |
| DLQ and interface | Viewing, manual retry | 3–5 days |
| Notifications | Bitrix24 IM integration | 1–2 days |
| Monitoring | Metrics, dashboard | 2–3 days |

A retry system is a mandatory component of any production integration. Without it, every external service failure turns into lost operations and manual recovery work.